Advantages of Streaming Microservices Architecture

Microservices are increasingly popular. They are small, modular services that are loosely coupled and capable of functioning independently. However, today’s advanced microservices require both in-memory and streaming capabilities to function smoothly. In this article, we look more closely at how a streaming microservices architecture can help.

Streaming Microservices Architecture: Is It Necessary?

Many applications today are implemented as pipelines of containerized microservices. For these pipelines to work well, they need uninterrupted, versatile, and smooth inter-service communication. The technology used for this purpose must be able to scale to many thousands of transactions per second, far more than traditional setups typically handle. This is what allows an application to realize the advantages of the microservices architecture.

Data Pipelines

Ideally, every microservice performs best when it functions completely independently of the other services. This lets separate teams optimize the performance of each service independently. A well-executed microservices system offers many advantages, including a simpler development and testing environment, greater agility and scalability, more granular service monitoring, less disruptive integration of new and enhanced capabilities, and easier troubleshooting.

Containers are widely regarded as the best technology for running microservices today. They have low overhead and make good use of available resources, which lets microservices run at peak performance. Containers also allow microservices to be developed without dedicated, extensive hardware, sometimes on nothing more than a personal computer.

However, microservices in containers require efficient and uninterrupted inter-service communication. Failures here can cause problems such as application-level failures and poor performance. Most traditional architectures also struggle with this when the volume and velocity of data are very high.

A Streaming Microservices Architecture Example

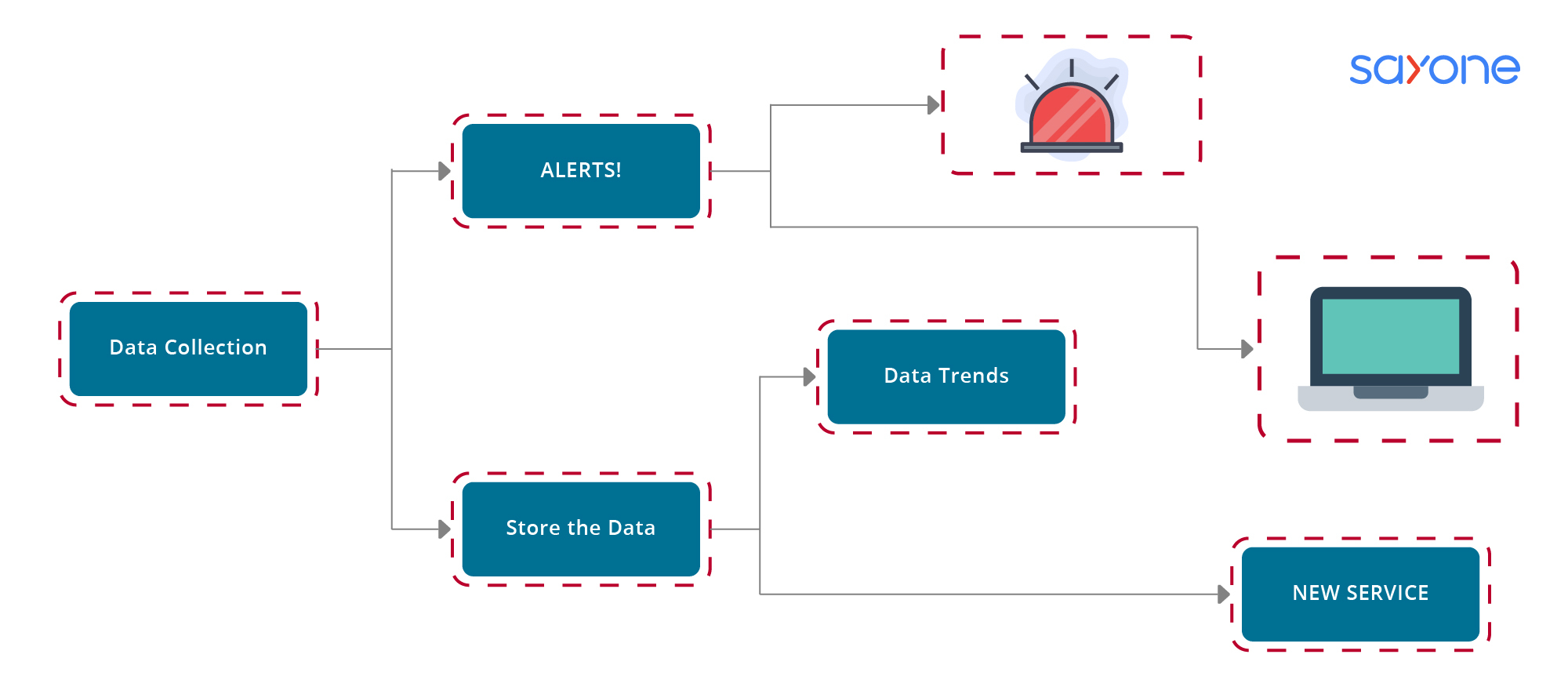

Consider a simple example of an IoT setup as shown in the figure.

Even a simple IoT application requires a considerable amount of inter-service communication to function without failures. In this application, all collected data is sent along two paths at once: one for analysis and one for real-time alerts. The two paths converge later as data displayed on a dashboard.
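
The two-path flow described above can be sketched in Python as a minimal, in-memory illustration. Everything here is hypothetical. The `TopicBus` class, the topic names (`sensor-readings`, `alerts`, `analytics`), and the 80 °C alert threshold stand in for a real streaming platform and real services.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Handler = Callable[[dict], None]


class TopicBus:
    """Hypothetical in-memory bus: every handler subscribed to a topic gets each message."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    def publish(self, topic: str, message: dict) -> None:
        for handler in self._handlers[topic]:
            handler(message)


bus = TopicBus()
dashboard: List[Tuple[str, dict]] = []  # where the two paths converge
ALERT_THRESHOLD_C = 80.0                # assumed threshold, for illustration only


def alert_service(reading: dict) -> None:
    # Real-time path: forward an alert immediately if the reading is too high.
    if reading["temp_c"] > ALERT_THRESHOLD_C:
        bus.publish("alerts", {"sensor": reading["sensor"], "temp_c": reading["temp_c"]})


def analytics_service(reading: dict) -> None:
    # Analysis path: just rounds the value here; a real service would aggregate.
    bus.publish("analytics", {"sensor": reading["sensor"], "temp_c": round(reading["temp_c"])})


# Both services subscribe to the same raw stream, so every reading takes both paths.
bus.subscribe("sensor-readings", alert_service)
bus.subscribe("sensor-readings", analytics_service)
# The dashboard subscribes to the output of each path.
bus.subscribe("alerts", lambda m: dashboard.append(("alert", m)))
bus.subscribe("analytics", lambda m: dashboard.append(("analytics", m)))

bus.publish("sensor-readings", {"sensor": "s1", "temp_c": 85.4})
print(dashboard)
```

Handlers run synchronously in subscription order here. A real platform delivers messages asynchronously, and each service would run in its own container.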

Without consistent inter-service communication, the independence of the services can be compromised whenever a service is modified or a new service is added. The two basic ways of passing data/messages between services are (1) queuing and (2) publish-subscribe.

In the queuing method, data sent by one service is received by another. This method also preserves transactional state and keeps the data secure. However, it is difficult to scale, even when the application is simple.
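
The defining property of queuing is that each message is consumed by exactly one receiver. The sketch below shows this with Python's standard `queue` and `threading` modules; the order IDs and worker names are hypothetical. Adding workers divides the messages between them rather than giving each worker a copy. A second service that needed the same data would therefore require its own queue.

```python
import queue
import threading
from collections import defaultdict

orders = queue.Queue()        # point-to-point channel between producer and consumers
received = defaultdict(list)  # consumer name -> messages it processed
lock = threading.Lock()
STOP = None                   # sentinel telling a consumer to exit


def consumer(name: str) -> None:
    while True:
        msg = orders.get()    # blocks until a message is available, then removes it
        if msg is STOP:
            break
        with lock:
            received[name].append(msg)


workers = [threading.Thread(target=consumer, args=(f"worker-{i}",)) for i in range(2)]
for w in workers:
    w.start()

for order_id in range(10):    # producer: enqueue ten hypothetical order IDs
    orders.put(order_id)
for _ in workers:             # one sentinel per consumer, queued after all the orders
    orders.put(STOP)
for w in workers:
    w.join()

all_msgs = sorted(m for msgs in received.values() for m in msgs)
print(all_msgs)  # each order ID appears exactly once across both workers
```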

In the second option, publish-subscribe, a streaming service streams (broadcasts) the data, and the data is available to multiple subscribing services at the same time. Events or stateful messages moving through the pipelines may be consumed by various microservices, so the data structures and types need to be somewhat flexible.
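
For contrast with the queue, here is a minimal publish-subscribe sketch in Python. The `Broker` class and subscriber names are hypothetical. Every subscriber has its own inbox that receives every published message and is consumed at that subscriber's own pace. Messages of different shapes share the same stream.

```python
from collections import deque
from typing import Deque, Dict


class Broker:
    """Hypothetical minimal pub/sub broker: each subscriber gets its own inbox."""

    def __init__(self) -> None:
        self._inboxes: Dict[str, Deque[dict]] = {}

    def subscribe(self, name: str) -> Deque[dict]:
        self._inboxes[name] = deque()
        return self._inboxes[name]

    def publish(self, message: dict) -> None:
        # Fan-out: every current subscriber's inbox receives the message.
        for inbox in self._inboxes.values():
            inbox.append(message)


broker = Broker()
analytics_inbox = broker.subscribe("analytics")
alerts_inbox = broker.subscribe("alerts")

# Events with different fields travel through the same stream.
broker.publish({"type": "reading", "sensor": "s1", "temp_c": 21.5})
broker.publish({"type": "status", "sensor": "s1", "online": True})

alerts_inbox.popleft()                          # the alerts service consumes one event...
print(len(analytics_inbox), len(alerts_inbox))  # ...without affecting analytics: 2 1
```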

Publish/subscribe streaming platforms do not enforce any specific data type or schema. This agnostic nature lets developers choose the most suitable data type/structure for each application or service. The two approaches above have now been combined into a distributed streaming platform that is scalable and secure for multiple publishers and subscribers. The platform is also designed to be simple to use in a containerized microservices architecture.
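
One well-known way of combining the two approaches is an append-only log read by named consumer groups, as in Apache Kafka. Every group sees every record (publish-subscribe), while records are divided among the members of a group (queuing). The Python sketch below is a simplification with hypothetical names. In particular, real platforms assign partitions rather than individual records to group members. Payloads are opaque bytes, so the log imposes no schema.

```python
from collections import defaultdict
from typing import Dict, List


class StreamLog:
    """Hypothetical append-only log with consumer groups.

    Every group reads every record; within a group, records are spread
    round-robin across members. Payloads are raw bytes: no schema is enforced.
    """

    def __init__(self) -> None:
        self.records: List[bytes] = []
        self.offsets: Dict[str, int] = defaultdict(int)         # group -> next unread offset
        self.members: Dict[str, List[str]] = defaultdict(list)  # group -> member names

    def append(self, payload: bytes) -> int:
        self.records.append(payload)
        return len(self.records) - 1  # offset of the new record

    def join(self, group: str, member: str) -> None:
        self.members[group].append(member)

    def poll(self, group: str) -> Dict[str, List[bytes]]:
        """Deliver the group's unread records, assigning each to one member."""
        members = self.members[group]
        if not members:
            return {}
        start = self.offsets[group]
        batch: Dict[str, List[bytes]] = defaultdict(list)
        for offset in range(start, len(self.records)):
            batch[members[offset % len(members)]].append(self.records[offset])
        self.offsets[group] = len(self.records)  # commit the group's position
        return dict(batch)


log = StreamLog()
for payload in (b'{"temp_c": 21.5}', b"\x01\x02raw-frame", b"<status>ok</status>"):
    log.append(payload)  # JSON, binary, XML: the log does not care

log.join("billing", "billing-1")
log.join("billing", "billing-2")
log.join("audit", "audit-1")

print(log.poll("billing"))  # records split between billing-1 and billing-2
print(log.poll("audit"))    # audit-1 receives all three records
```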

Streaming Microservices Platform

The distributed streaming platform has three predominant characteristics:

- Publish and subscribe to message streams, much like a messaging system.

- Persist (store) message streams in a fault-tolerant way.

- Process streams in real time as events occur.

The data streams can also be described as pervasive, persistent, and performant. Pervasive means that any microservice can publish data/messages to a stream, and any microservice can subscribe to any data stream. Persistent means that individual microservices do not need to ensure that the data they publish is properly stored. The streaming platform is a system of record from which all data can be replicated or replayed when required. Performant means that the lightweight protocols involved can process approximately 150,000 records per second for each containerized microservice. This capacity scales horizontally as more containers are added.
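
The persistent, replayable behavior described above can be illustrated with a file-backed stream in Python. This is a sketch with hypothetical names (`PersistentStream`, `readings.jsonl`). A single local file is durable across restarts, but it is not fault-tolerant in the replicated sense a real streaming platform provides. What it shows is that a subscriber that joins late, or restarts, can replay history from any offset without the publisher doing anything extra.

```python
import json
import os
import tempfile


class PersistentStream:
    """Hypothetical file-backed stream: records survive restarts and can be replayed."""

    def __init__(self, path: str) -> None:
        self.path = path

    def publish(self, record: dict) -> None:
        # Append one JSON line and force it to disk before returning.
        with open(self.path, "a", encoding="utf-8") as f:
            f.write(json.dumps(record) + "\n")
            f.flush()
            os.fsync(f.fileno())

    def replay(self, from_offset: int = 0):
        """Yield (offset, record) pairs starting at from_offset."""
        if not os.path.exists(self.path):
            return
        with open(self.path, encoding="utf-8") as f:
            for offset, line in enumerate(f):
                if offset >= from_offset:
                    yield offset, json.loads(line)


path = os.path.join(tempfile.mkdtemp(), "readings.jsonl")
stream = PersistentStream(path)
for t in (20.1, 20.4, 21.0):
    stream.publish({"temp_c": t})

# A subscriber that joins later (or restarts) still sees the full history.
late_subscriber = PersistentStream(path)
history = list(late_subscriber.replay())
resumed = list(late_subscriber.replay(from_offset=2))  # resume from a saved offset
print(history)
print(resumed)
```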

A top advantage of publish/subscribe streaming platforms is the decoupled nature of the communication. Publishers do not have to track, or even be aware of, any of the subscribers, and any subscriber can access any published stream. As a result, new publishers and subscribers can be added without disrupting any of the existing microservices.

Application-level pipelines are built by chaining microservices together, each subscribing to the data streams it needs to perform its function. Each microservice can also publish its own data stream for any other microservice to use. To handle higher data volumes, you can scale the application by starting additional containers wherever bottlenecks occur.
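
Because scaling is horizontal, a rough container count per pipeline stage is simple ceiling division. The sketch below takes the article's figure of about 150,000 records per second per container as an assumption (measure your own services instead). It also assumes throughput grows linearly with containers. The stage names and loads are hypothetical.

```python
# Per-container throughput quoted in this article; an assumption to replace
# with a rate measured for your own services.
PER_CONTAINER_RPS = 150_000


def containers_needed(target_rps: int, per_container_rps: int = PER_CONTAINER_RPS) -> int:
    """Smallest container count whose combined throughput covers target_rps.

    Assumes throughput scales linearly as containers are added.
    """
    if target_rps <= 0:
        return 0
    return -(-target_rps // per_container_rps)  # integer ceiling division


# Hypothetical peak loads (records/second) for each stage of a pipeline.
stage_load = {"ingest": 400_000, "enrich": 120_000, "alerts": 310_000}
plan = {stage: containers_needed(rps) for stage, rps in stage_load.items()}
print(plan)  # {'ingest': 3, 'enrich': 1, 'alerts': 3}
```

In practice you would leave some headroom above this minimum for traffic spikes and container failures.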

Streaming Microservices Architecture Tutorial

A streaming microservice architecture is a type of service-oriented architecture (SOA) that enables developers to build services that stream data from a single source to multiple consumers. It is used to create applications that have high availability, scalability, and resiliency, while supporting a large number of concurrent users.

The primary building blocks of a streaming microservice architecture are the source, which produces the data, and the consumer, which receives it. The source and consumer are connected by a message bus, an asynchronous messaging system that carries data between them. This allows each to respond to events that occur in the system.
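
The source/bus/consumer relationship can be sketched with Python's `asyncio.Queue` standing in for the message bus. This is an in-process illustration only; a real bus is separate infrastructure shared by services running in different containers. The event names are hypothetical. The source never calls the consumer; it only puts events on the bus, and the consumer reacts to them as they arrive.

```python
import asyncio
from typing import List

END = None  # sentinel marking the end of the stream


async def source(bus: asyncio.Queue, events: List[str]) -> None:
    # The source only publishes to the bus; it never calls the consumer directly.
    for event in events:
        await bus.put(event)
    await bus.put(END)


async def consumer(bus: asyncio.Queue, handled: List[str]) -> None:
    # The consumer reacts to each event whenever it arrives on the bus.
    while True:
        event = await bus.get()
        if event is END:
            break
        handled.append(f"handled {event}")


async def main() -> List[str]:
    # Bounded queue: if the consumer falls behind, the source waits (back-pressure).
    bus: asyncio.Queue = asyncio.Queue(maxsize=100)
    handled: List[str] = []
    await asyncio.gather(
        source(bus, ["user-signup", "order-placed"]),
        consumer(bus, handled),
    )
    return handled


print(asyncio.run(main()))  # ['handled user-signup', 'handled order-placed']
```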

To create a streaming microservice architecture, developers must first identify the services that will be part of the system. Each service should be designed to perform a specific task, such as processing data, managing user accounts, or sending notifications. Once the services have been identified, developers must create an interface for each service to communicate with other services in the system. This interface should be based on a messaging protocol, such as AMQP or MQTT.
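
One way to keep services independent of the transport is to have them depend only on a small messaging interface. The Python sketch below is hypothetical throughout. `Message`, `MessageBus`, `InMemoryBus`, `NotificationService`, and the `user-accounts` topic are illustrative names. In a real deployment, an adapter built on an AMQP or MQTT client library would implement the same two methods; no such adapter is shown here.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Protocol


@dataclass(frozen=True)
class Message:
    """Hypothetical envelope shared by all services."""
    topic: str
    payload: bytes
    content_type: str = "application/json"
    timestamp: float = field(default_factory=time.time)


class MessageBus(Protocol):
    """The only interface services see; an AMQP or MQTT adapter would implement it."""

    def publish(self, message: Message) -> None: ...

    def subscribe(self, topic: str, handler: Callable[[Message], None]) -> None: ...


class InMemoryBus:
    """Test double that satisfies MessageBus without any broker."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[Message], None]]] = {}

    def publish(self, message: Message) -> None:
        for handler in self._handlers.get(message.topic, []):
            handler(message)

    def subscribe(self, topic: str, handler: Callable[[Message], None]) -> None:
        self._handlers.setdefault(topic, []).append(handler)


class NotificationService:
    """Example service: depends only on the MessageBus interface, not on a transport."""

    def __init__(self, bus: MessageBus) -> None:
        self.sent: List[str] = []
        bus.subscribe("user-accounts", self.on_account_event)

    def on_account_event(self, message: Message) -> None:
        self.sent.append(f"email for {message.payload.decode()}")


bus = InMemoryBus()
notifier = NotificationService(bus)
bus.publish(Message(topic="user-accounts", payload=b"alice"))
print(notifier.sent)  # ['email for alice']
```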

Once the services are in place, developers must create a data pipeline. This is a sequence of services that process the data from the source and forward it to the consumer. In most cases, the data pipeline consists of a series of stages, each of which performs a specific task. For example, the first stage may filter the data, the second stage may enrich the data, and the third stage may forward the data to the consumer.
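
The filter, enrich, and forward stages above can be sketched as chained Python generators. In a deployed system, each stage would be its own microservice reading from and writing to streams; here, generators stand in for those streams inside one process. The sensor IDs and the location lookup table are hypothetical.

```python
from typing import Callable, Iterable, Iterator

# Hypothetical lookup table used by the enrichment stage.
SENSOR_LOCATIONS = {"s1": "boiler room", "s2": "roof"}


def filter_stage(events: Iterable[dict]) -> Iterator[dict]:
    # Stage 1: drop malformed events (here, those without a reading).
    for e in events:
        if e.get("temp_c") is not None:
            yield e


def enrich_stage(events: Iterable[dict]) -> Iterator[dict]:
    # Stage 2: add context the consumer needs.
    for e in events:
        yield {**e, "location": SENSOR_LOCATIONS.get(e["sensor"], "unknown")}


def forward_stage(events: Iterable[dict], deliver: Callable[[dict], None]) -> None:
    # Stage 3: hand each event to the consumer.
    for e in events:
        deliver(e)


raw = [
    {"sensor": "s1", "temp_c": 72.0},
    {"sensor": "s2", "temp_c": None},
    {"sensor": "s3", "temp_c": 18.5},
]
delivered = []
forward_stage(enrich_stage(filter_stage(raw)), delivered.append)
print(delivered)
```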

Finally, developers must create a system for monitoring and managing the streaming microservices architecture. This includes setting up logging and analytics, as well as setting up alerts to notify developers of any errors or issues.
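
A minimal monitoring hook might wrap each message handler, log failures, keep counters that feed analytics, and alert developers after repeated errors. This Python sketch uses the standard `logging` module. The `MonitoredHandler` class, the three-failure threshold, and the alert callback are hypothetical. A real system would send the alert to a paging or chat tool.

```python
import logging
from typing import Any, Callable

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("stream-monitor")


class MonitoredHandler:
    """Hypothetical wrapper: logs failures, counts outcomes, alerts on repeated errors."""

    def __init__(self, handler: Callable[[Any], None], alert: Callable[[str], None],
                 max_consecutive_failures: int = 3) -> None:
        self.handler = handler
        self.alert = alert  # callable that notifies developers
        self.max_failures = max_consecutive_failures
        self.processed = 0
        self.failed = 0
        self._consecutive = 0

    def __call__(self, message: Any) -> None:
        try:
            self.handler(message)
        except Exception:
            self.failed += 1
            self._consecutive += 1
            logger.exception("handler failed for message %r", message)
            if self._consecutive == self.max_failures:  # alert once per failure streak
                self.alert(f"{self._consecutive} consecutive failures")
        else:
            self.processed += 1
            self._consecutive = 0  # a success ends the failure streak


def parse_reading(message: str) -> None:
    float(message)  # raises ValueError for malformed readings


alerts = []
monitored = MonitoredHandler(parse_reading, alerts.append)
for msg in ["21.5", "bad", "oops", "??", "22.0"]:
    monitored(msg)
print(monitored.processed, monitored.failed, alerts)  # 2 3 ['3 consecutive failures']
```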

By using a streaming microservice architecture, developers can create applications that are highly scalable, resilient, and available. This makes it ideal for applications that require real-time data processing, such as streaming media, online gaming, and financial trading.

Advantages of the streaming platform:

- Supports even the most demanding applications

- Uses a single API for both publishing and subscribing

- Any microservice can connect to any other while remaining independent

- Compatible with containers for scaling, with no need to create new images

- Problems are minimal, so less troubleshooting is needed

In the example above, each microservice can publish streams to, and subscribe to streams from, many other microservices. New services can be added without disrupting the existing ones. The most challenging applications are typically consumer-facing web services. In these, transactions can run into millions per hour with no allowance for lost transactions, across geographically dispersed data centers. Some of these data centers may be on-premises, while others are in the cloud.

Popularity of Streaming Microservices Architecture

The distributed streaming platform fulfills most of the promises of the microservices architecture. The key is combining messaging, storage, and stream processing in a single, lightweight solution. Pairing a containerized microservices architecture with the simple, robust messaging of a streaming platform lets organizations greatly improve the agility with which they build, deploy, and maintain the data pipelines that the most demanding applications require. In fact, approximately 88% of microservices users agree or agree completely that microservices offer many benefits to development teams.

Several commercial offerings now combine a streaming platform with other desirable capabilities, such as a database, file system replication, shared storage, and security. The resulting solutions are optimized for implementing containerized microservices architectures through open APIs.

A streaming platform pilot can start on a single server to minimize complexity and then expand to multiple servers (a local cluster or the cloud) as required. If the pilot converts an existing application, the remaining inter-service communications can be replaced one at a time to keep complexity low.
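
Replacing inter-service communications one at a time can be sketched as a small router. It sends a link through the streaming platform only once that link has been migrated, while every other link keeps its legacy direct call. Everything in this Python sketch is hypothetical, including the `MigrationRouter` class, the service names, and the plain list standing in for a topic.

```python
from typing import Any, Callable, Dict, List, Optional, Set, Tuple

Link = Tuple[str, str]  # (source service, target service)


class MigrationRouter:
    """Hypothetical router used while converting links to streaming one at a time."""

    def __init__(self, legacy: Dict[Link, Callable[[dict], Any]], stream: List[dict]) -> None:
        self.legacy = legacy  # link -> existing direct-call function
        self.stream = stream  # stand-in for a topic on the streaming platform
        self.migrated: Set[Link] = set()

    def migrate(self, source: str, target: str) -> None:
        self.migrated.add((source, target))

    def send(self, source: str, target: str, payload: dict) -> Optional[Any]:
        link = (source, target)
        if link in self.migrated:
            # Publish and return immediately; the target now reads from the stream.
            self.stream.append({"source": source, "target": target, "payload": payload})
            return None
        return self.legacy[link](payload)  # unchanged legacy path


topic: List[dict] = []
router = MigrationRouter(
    legacy={
        ("orders", "billing"): lambda p: f"billed {p['id']}",
        ("orders", "shipping"): lambda p: f"shipped {p['id']}",
    },
    stream=topic,
)
router.migrate("orders", "billing")  # convert just one link first

print(router.send("orders", "billing", {"id": 7}))   # None: published to the stream
print(router.send("orders", "shipping", {"id": 7}))  # shipped 7: still a direct call
print(topic)
```

Once the target service reliably consumes from the stream, the next link can be migrated the same way and its legacy entry removed.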

Takeaway

For large applications built as a cohesive group of smaller jobs (microservices), a streaming microservices architecture provides a foundation that you can keep enhancing and expanding for many years to come.

How SayOne Can Help

At SayOne, our integrated teams of developers serve our clients with microservices that are fully aligned with the future of the business or organization. The microservices we design and implement are built around Agile and DevOps principles. Our system model focuses on individual components that are resilient, fortified, and highly reliable.

We design microservices for our clients in a manner that assures future success in terms of scalability and adaptation to the latest technologies. They are also built to accept new components easily and smoothly, allowing cost-effective functional upgrades.

Are you contemplating shifting to microservices for business growth? Call SayOne today!

Our microservices are built from reusable components that provide greater flexibility and superior productivity for the organization/business. We work with start-ups, SMBs, and enterprises, helping them visualize the entire microservices journey while allowing their legacy systems to coexist effectively.

Our microservices are developed for agility, efficient performance, ease of maintenance, scalability, and security.

FAQs

What is streaming microservices architecture?

Streaming microservices architecture is a type of distributed application architecture that integrates multiple microservices into a single system. These microservices communicate with each other through streaming data pipelines, allowing them to process and exchange data in real time. This type of architecture is often used in cloud-native applications that require high scalability and fast response times.

What are the benefits of streaming microservices architecture?

Streaming microservices architecture enables developers to break down complex applications into individual components that can be independently deployed and managed. This reduces the time and effort required to develop and maintain the application, while also making it easier to scale and respond to changing demands.

What are the challenges of streaming microservices architecture?

One of the main challenges associated with streaming microservices architecture is the potential for increased complexity. There is also a risk of data loss or latency due to the distributed nature of the architecture. Finally, there can be a lack of visibility into the performance of each individual microservice, making it more difficult to identify and fix issues.
