Ranju R | October 9, 2023 | 8 min read


Microservices, often seen as small service components in software architecture, are designed to be self-sufficient and independent. Each microservice does one thing, and it does it well. They communicate with each other through simple, universally understood protocols, typically over HTTP. By breaking up an application into these smaller components, developers can update, scale, and manage each part without affecting the whole system.

Imagine a string of dominos. If one falls or falters, there can be a cascade of impacts. Similarly, if one microservice lags, the entire application might suffer. Speed and responsiveness are crucial. Slow or unresponsive services can lead to poor user experiences, loss of customers, and reduced business efficiency.

Remember, while microservices can offer flexibility, their real strength shows when they're performant. As you dig into best practices, one thing becomes clear: a well-performing system is not just about having microservices but about ensuring they run at their best.

Performance testing is crucial for microservices as it evaluates the system's capability to handle expected and unexpected user loads. By doing so, you can uncover bottlenecks before they become a problem in the production environment.

Thorough performance testing ensures your microservices run smoothly, offering a stellar user experience. The right strategies and tools make the process efficient and effective.
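As a rough illustration of what a load test measures, here is a minimal sketch that fires concurrent requests and reports throughput and latency percentiles. It assumes a simulated service: `fake_service_call` and `run_load_test` are hypothetical names, and the 1 to 5 ms sleep stands in for a real HTTP call. A dedicated load-testing tool would replace all of this in practice.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import quantiles


def fake_service_call(request_id: int) -> float:
    """Hypothetical stand-in for an HTTP call; returns elapsed seconds."""
    start = time.perf_counter()
    time.sleep(0.001 * (1 + request_id % 5))  # simulated 1-5 ms of work
    return time.perf_counter() - start


def run_load_test(total_requests: int, concurrency: int) -> dict:
    """Send total_requests calls through `concurrency` worker threads."""
    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(fake_service_call, range(total_requests)))
    wall_time = time.perf_counter() - started
    cuts = quantiles(latencies, n=100)  # 99 cut points; cuts[94] is ~p95
    return {
        "requests": len(latencies),
        "throughput_rps": len(latencies) / wall_time,
        "p50_s": cuts[49],
        "p95_s": cuts[94],
    }


if __name__ == "__main__":
    print(run_load_test(total_requests=200, concurrency=20))
```

Raising `concurrency` step by step and watching where p95 starts to climb is one simple way to spot the point at which a service begins to saturate.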

When embarking on the microservices journey, knowing which metrics to track is pivotal. Identifying the right metrics determines how accurately you can judge your system's performance. Here are some focal areas:

Monitoring these metrics provides a clear picture of your microservices' health. When you have a pulse on these metrics, you can identify issues before they become problems and make sure your system scales gracefully with demand.
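To make this concrete, the sketch below summarizes a window of request samples into a few numbers. The choice of metrics here (throughput, error rate, p50 and p99 latency) is an assumption for illustration, and `RequestSample` and `summarize` are hypothetical names rather than part of any monitoring library.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class RequestSample:
    latency_ms: float
    ok: bool  # False if the request ended in an error


def summarize(samples: list[RequestSample], window_seconds: float) -> dict:
    """Reduce raw request samples to a handful of health metrics."""
    latencies = [s.latency_ms for s in samples]
    # "inclusive" treats the samples as the full population for this window.
    cuts = quantiles(latencies, n=100, method="inclusive")
    errors = sum(1 for s in samples if not s.ok)
    return {
        "throughput_rps": len(samples) / window_seconds,
        "error_rate": errors / len(samples),
        "p50_ms": cuts[49],
        "p99_ms": cuts[98],
    }
```

Percentiles are used instead of an average because a small share of very slow requests can hide behind a healthy-looking mean.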

When building applications with microservices, one potential challenge is latency. As each service communicates over a network, the time it takes for data to travel between services can add up. This challenge intensifies when services are distributed across multiple servers or data centers.
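A small sketch can show how these delays add up. It assumes three hypothetical downstream services with simulated latencies, and compares calling them one after another with calling them concurrently. The service names and timings are made up for illustration.

```python
import asyncio
import time

# Hypothetical downstream services and simulated latencies in seconds.
SERVICE_LATENCY = {"inventory": 0.03, "pricing": 0.05, "reviews": 0.04}


async def call_service(name: str) -> str:
    await asyncio.sleep(SERVICE_LATENCY[name])  # stands in for a network round trip
    return name


async def sequential() -> float:
    """Call each service in turn; total time is roughly the sum of latencies."""
    start = time.perf_counter()
    for name in SERVICE_LATENCY:
        await call_service(name)
    return time.perf_counter() - start


async def concurrent() -> float:
    """Call all services at once; total time is roughly the slowest call."""
    start = time.perf_counter()
    await asyncio.gather(*(call_service(n) for n in SERVICE_LATENCY))
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"sequential: {asyncio.run(sequential()):.3f}s")
    print(f"concurrent: {asyncio.run(concurrent()):.3f}s")
```

When calls do not depend on each other's results, issuing them concurrently keeps the overall latency close to that of the slowest dependency instead of the sum of all of them.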

Read More on How you can Improve Latency in Microservices

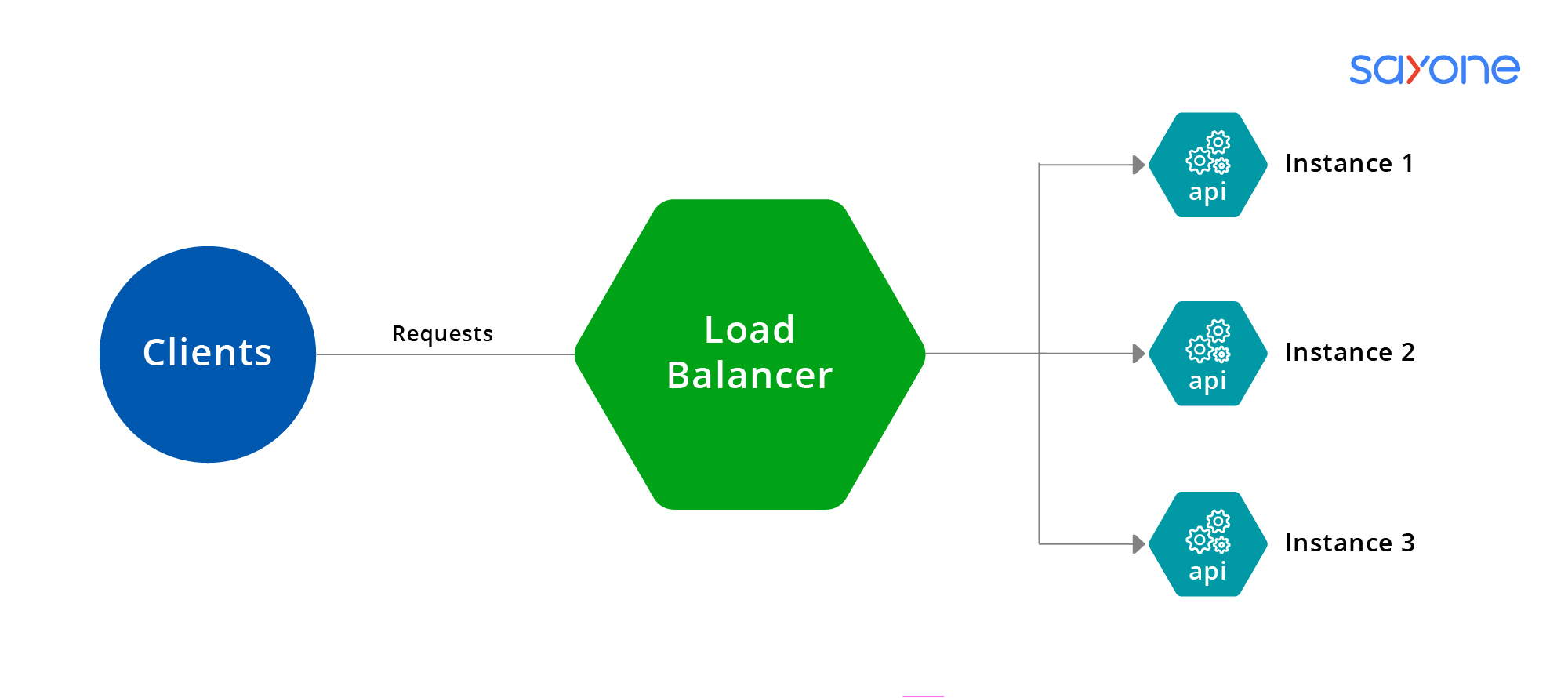

Image: Example of a server-side load balancer

While microservices can introduce latency challenges, with the right strategies, these can be minimized. Proper design choices, from the type of data format used to leveraging caches and load balancers, play a crucial role in achieving the desired performance.
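As one example of these design choices, the following is a minimal sketch of round-robin load balancing, the simplest strategy a server-side load balancer can use. The `RoundRobinBalancer` class and the backend addresses are hypothetical; production balancers also track health and connection counts, which this sketch leaves out.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backends in a fixed rotating order."""

    def __init__(self, backends: list[str]) -> None:
        if not backends:
            raise ValueError("at least one backend is required")
        self._backends = cycle(backends)

    def next_backend(self) -> str:
        return next(self._backends)


if __name__ == "__main__":
    balancer = RoundRobinBalancer(["svc-a:8080", "svc-b:8080", "svc-c:8080"])
    for _ in range(4):
        print(balancer.next_backend())
```

Each instance receives every third request in this setup, so no single instance absorbs the whole load.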

Here is our in-depth guide on Load Balancing Microservices Architecture Performance

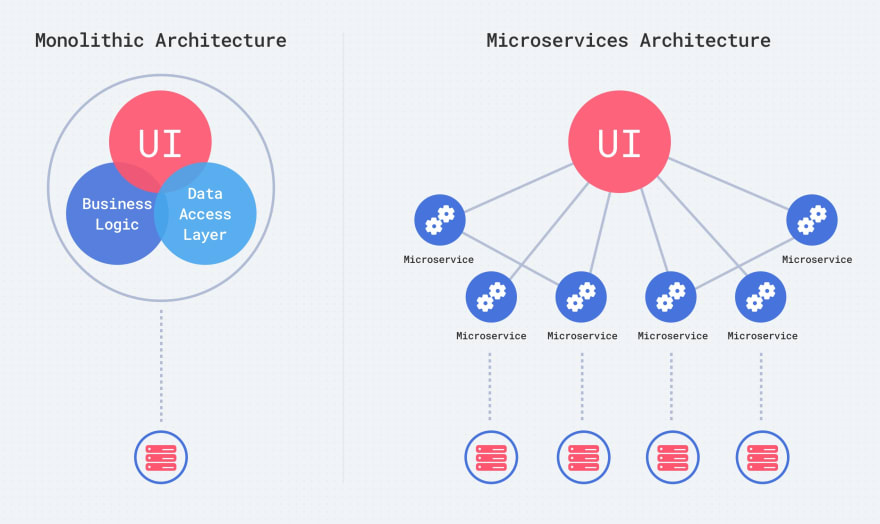

Scaling is a crucial aspect of microservices architecture. To ensure that your microservices-based application can handle increasing requests without compromising performance, it's essential to understand the different scaling approaches and choose the right one.

Monolithic vs. microservices systems: A monolith has to be scaled as a whole, whereas in a microservices system each service can be scaled independently, and only when it needs it. This makes more effective use of resources.

When it comes to microservices, you've got two main scaling methods. Horizontal scaling involves adding more machines to your setup to spread the workload. It's like adding more lanes to a highway. Vertical scaling, on the other hand, is about beefing up a single machine's capacity, kind of like getting a bigger truck.

Check out the complete guide on microservice scaling

Ensuring reliability while improving performance requires implementing robust error handling, retries, and circuit breaker patterns to handle failures gracefully. Additionally, implementing monitoring, logging, and alerting mechanisms allows you to detect and respond to performance issues in real-time, minimizing the impact on users.
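The circuit breaker pattern mentioned above can be sketched in a few lines. This is a simplified illustration under stated assumptions: the `CircuitBreaker` class, its thresholds, and the injectable `clock` parameter are all hypothetical, and established resilience libraries offer more complete implementations.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when the breaker rejects a call without trying it."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 5.0,
                 clock=time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_timeout:
                raise CircuitOpenError("circuit open; failing fast")
            # Timeout elapsed: let one trial call through (half-open state).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            half_open = self._opened_at is not None
            if half_open or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # open (or re-open) the circuit
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

After `failure_threshold` consecutive failures the breaker stops calling the struggling service for `reset_timeout` seconds, which gives it room to recover and keeps callers from piling up on slow requests.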

Think of auto-scaling as a magic tool that adjusts resources based on demand. When there's a surge in users, auto-scaling ensures your microservices have the necessary capacity by adding more resources. Once the demand drops, it scales down. It's all about flexibility and adapting on the go.
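Behind the "magic" there is usually a simple proportional rule. The Kubernetes Horizontal Pod Autoscaler, for example, documents desired replicas = ceil(current replicas × current metric / target metric). The sketch below applies that rule with minimum and maximum bounds. The function name, the default bounds, and the example figures are assumptions, and real autoscalers add tolerances and cooldown periods that are omitted here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Proportional scaling rule, clamped to [min_replicas, max_replicas]."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 4 replicas at 90% average CPU with a 60% target: scale up.
    print(desired_replicas(4, 90, 60))
    # 4 replicas at 20% average CPU with a 60% target: scale down.
    print(desired_replicas(4, 20, 60))
```

The upper bound matters as much as the rule itself, since it caps cost when a traffic spike or a faulty metric would otherwise keep adding instances.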

Remember, to get the best out of microservices, it's essential to find the right balance between resources and demand. You can ensure smooth sailing for your software development journey through proper scaling and load-balancing strategies.

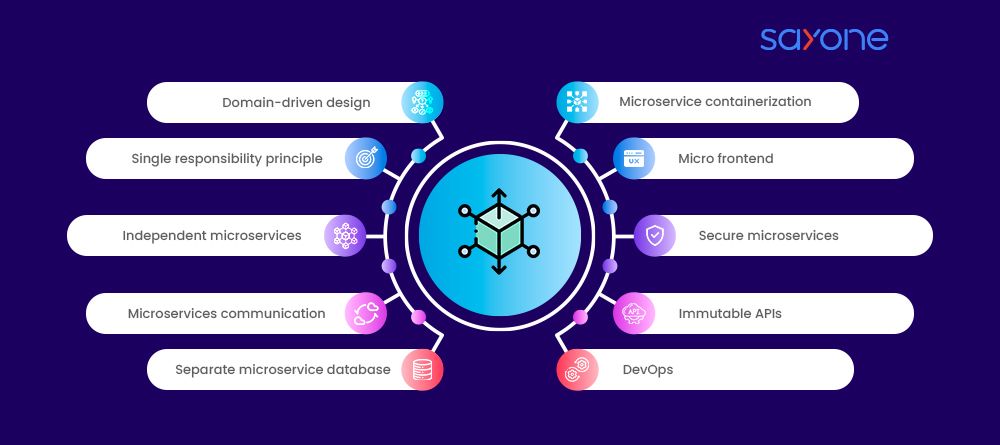

Read More on Domain Driven Design for Microservices

Optimizing microservices isn't just about speeding up the service; it's about making your software more resilient and responsive. Let's talk about the best ways to tune up your microservices!

Start by keeping your codebase clean and concise. Refactor regularly, avoid unnecessarily complex loops, and use caching judiciously. Choose the database per service: NoSQL databases like MongoDB or Cassandra may fit some services better, while relational databases like MySQL or PostgreSQL may suit others.
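Using caching judiciously usually means giving cached entries an expiry, so stale data does not live forever. Here is a minimal in-process sketch of that idea. `TTLCache`, `get_or_load`, and the injectable `clock` are hypothetical names, and a shared cache service would be the more typical choice across multiple instances.

```python
import time


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._store = {}  # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        """Return a fresh cached value, or call loader(key) and cache it."""
        entry = self._store.get(key)
        now = self._clock()
        if entry is not None and entry[0] > now:
            return entry[1]  # fresh hit, no call to the slow backend
        value = loader(key)
        self._store[key] = (now + self._ttl, value)
        return value
```

A short TTL trades a little freshness for far fewer calls to a slow database or downstream service, which is often a good deal for read-heavy data.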

Read More about Java Microservices Architecture - A Complete Guide

Containers, like Docker, offer an isolated environment for your microservice, ensuring consistent performance. Combine this with orchestration tools like Kubernetes, which can efficiently manage, scale, and maintain containers. This combo keeps services responsive, even under high traffic.

Cut down on the chatter! Use response compression techniques like gzip to reduce data size during transmission. Choose the right communication protocol. While REST is popular, consider protocols like gRPC for faster, more compact data exchanges. Always aim for direct service-to-service calls, avoiding unnecessary intermediaries.
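To get a feel for what gzip buys you, the sketch below compresses a JSON payload with Python's standard `gzip` module and compares sizes. The payload shape and the helper names `compress_json` and `decompress_json` are assumptions for illustration. In a real service, compression is usually negotiated by the web server or framework via HTTP headers.

```python
import gzip
import json


def compress_json(payload: dict) -> bytes:
    """Serialize a payload to JSON and gzip the resulting bytes."""
    raw = json.dumps(payload).encode("utf-8")
    return gzip.compress(raw)


def decompress_json(blob: bytes) -> dict:
    """Reverse of compress_json."""
    return json.loads(gzip.decompress(blob).decode("utf-8"))


if __name__ == "__main__":
    # Hypothetical, repetitive response body of the kind APIs often return.
    payload = {"items": [{"id": i, "status": "in_stock"} for i in range(500)]}
    raw_size = len(json.dumps(payload).encode("utf-8"))
    gz_size = len(compress_json(payload))
    print(f"raw: {raw_size} bytes, gzip: {gz_size} bytes")
```

Repetitive JSON compresses very well, but compression costs CPU time, so it tends to pay off for larger responses more than for tiny ones.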

Read More about Microservice Communication: A Complete Guide

In wrapping up, it's evident that optimizing the performance of microservices isn't a one-time task but an ongoing journey. We've highlighted crucial practices, including understanding bottlenecks, maintaining small and single-purpose microservices, optimizing data handling, and adopting proactive monitoring.

Microservices' performance optimization is akin to tuning a musical instrument. It's not just about setting it up initially but continually adjusting and refining it to maintain harmony. As technology shifts and as your application grows, regularly revisiting your strategy ensures you stay ahead of the curve.

Now, if you're looking to kickstart this journey but need the right expertise to guide you, we are here for you. At SayOneTech, our mastery in crafting microservices-based applications stands out. With our deep-rooted knowledge of agile and DevOps practices, we not only design applications but also deliver updates consistently with minimal interruptions.

Seeking to elevate your application's performance? Let SayOneTech be your trusted partner on this journey.

